The Gulf Between Knowing and Prompting

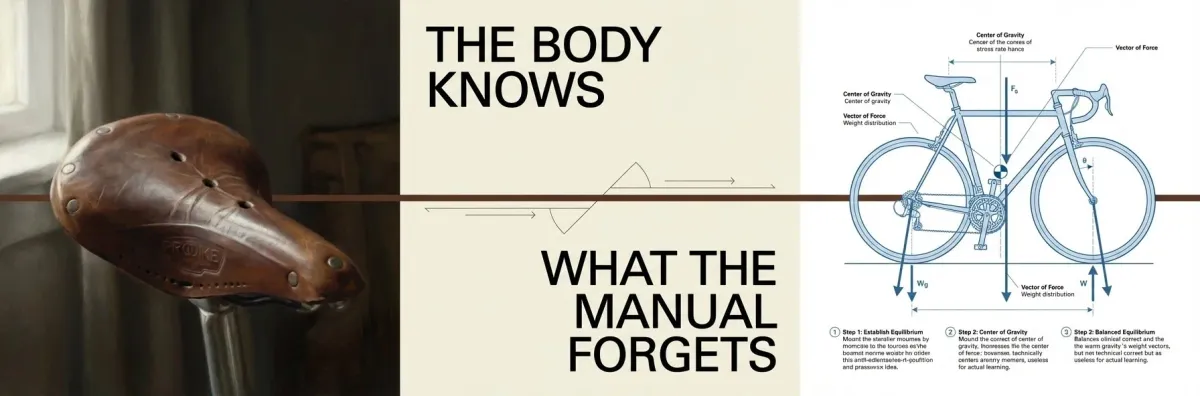

Most of what experts know has never been put into words. It didn’t need to be. Colleagues share context. Body language signals uncertainty. Mutual adjustment corrects misunderstanding before anyone notices it happened. Decades of professional knowledge live in this space: understood, acted on, never articulated.

Then AI arrived and started asking.

Researchers call the resulting collision “the gulf of envisioning” (Subramonyam et al., 2024). The term is academic. The experience is not. You know exactly what you want. You sit down to instruct the machine. The words don’t come. Not because the knowledge has disappeared, but because it was never in language to begin with. What you’re encountering is tacit knowledge: the things you know but have never had to say. AI doesn’t understand context, doesn’t read body language, doesn’t adjust mid-sentence. It needs everything spelled out. And it turns out that “everything” is far more than most people expect.

Why Even Experts Struggle with AI

The pattern holds regardless of seniority or intelligence. I’ve seen it with C-suite executives, with engineers, with designers. The gap is not about competence. It is about the nature of the knowledge itself.

Communication professionals tend to fare better. They spend their careers translating the implicit into the explicit: simplifying complex ideas into briefs, identifying unspoken client needs, clarifying what someone means rather than what they said. These skills transfer directly to AI interaction. What used to be a professional specialty has become a general requirement.

But even they hit limits. Tankelevitch et al. (2024) found that effective AI use demands metacognition: thinking about your own thinking. Articulating goals you have never stated. Analyzing processes you normally execute on instinct. Evaluating results against standards you have not previously defined.

No wonder people find it exhausting.

Automation Bias: The Danger After the Struggle

The exhaustion is worth paying attention to, because it creates a secondary problem that most adoption conversations ignore.

When instructing AI is cognitively demanding, people have less energy left to scrutinize what comes back. Researchers call this automation bias: the tendency to accept AI output uncritically. The harder the prompting, the weaker the filter on the result.

But the struggle phase is still the safer phase. When prompting is hard, people are tired, but they stay engaged. They notice errors. They push back. They rewrite. The greater danger comes later, when users become fluent enough that the interaction feels effortless. The cognitive vigilance drops. The outputs look right. The user stops checking. And the tool, which has no capacity for judgment, continues producing with the same confident fluency whether it is right or wrong.

Organizations that measure AI adoption by how smoothly people use it are measuring the wrong thing. Smooth can mean fluent and critical. It can also mean passive and unquestioning.

Why AI Prompting Frameworks Help (and What They Can’t Do)

In workshops, I’ve seen something consistent: when people receive even a simple framework for AI interaction, a set of questions to consider before writing a prompt, the struggle becomes productive instead of demoralizing.

In one session, a participant who had called herself “AI-resistant” used a framework that asked her to clarify her audience, her desired outcome, and her evaluation criteria before prompting. By the end of the session, she had run multiple successful interactions. What changed was not her competence. It was her ability to articulate what she already knew.

I should be transparent: I teach these frameworks in my workshops. That creates an obvious alignment between my professional work and my argument that frameworks help. Weigh my conviction accordingly.

But the value I observe is not about better AI output. It is about what happens to the person using the framework. Clarifying your intentions before interacting with AI strengthens the same metacognitive muscles that improve decision-making, communication, and judgment everywhere else. The framework is scaffolding. It is not permanent. But what it builds in the person using it persists after the scaffolding comes down, and it outlasts the allure of shortcuts.

Will Better AI Close the Gap?

The counterargument writes itself: better AI will close the gulf. Models will learn to interpret vague intent the way a good colleague does. The struggle will become unnecessary. Building frameworks around a temporary UX gap is like teaching Boolean search operators in 2005.

This objection is partially right. AI systems already handle ambiguity better than they did eighteen months ago. The gulf is narrowing from the technology side.

But the cognitive value does not depend on the gulf persisting. Even when AI understands you perfectly, you still benefit from understanding yourself. Knowing what you want before you ask for it. Knowing how to evaluate what you receive. Knowing when to override a confident answer. These are not prompting skills. They are thinking skills. They remain valuable whether the tool requires them or not.

The question is whether AI interaction becomes an opportunity to develop these skills or a way to avoid them entirely. The tool can function as either. Which one it becomes is not a technology question. It is an adoption question.

AI as a Mirror for How We Think

AI is not a particularly good mirror. It reflects what you give it, filtered through training data and probability distributions. It cannot tell you what you should want or whether your assumptions are sound.

But it is an unusually honest one. When your prompt produces garbage, the problem is usually that your thinking was vague. When an output surprises you, the surprise often reveals an assumption you didn’t know you held. When you cannot explain why a result is wrong, even though you know it is, you’ve found the exact edge of your tacit knowledge.

These moments are uncomfortable. They are also the most direct glimpse available into how you actually think.

The gap between what you know and what you can say is not a problem AI created. AI made it visible. What you do with that visibility is the choice that will compound.